Executive Summary

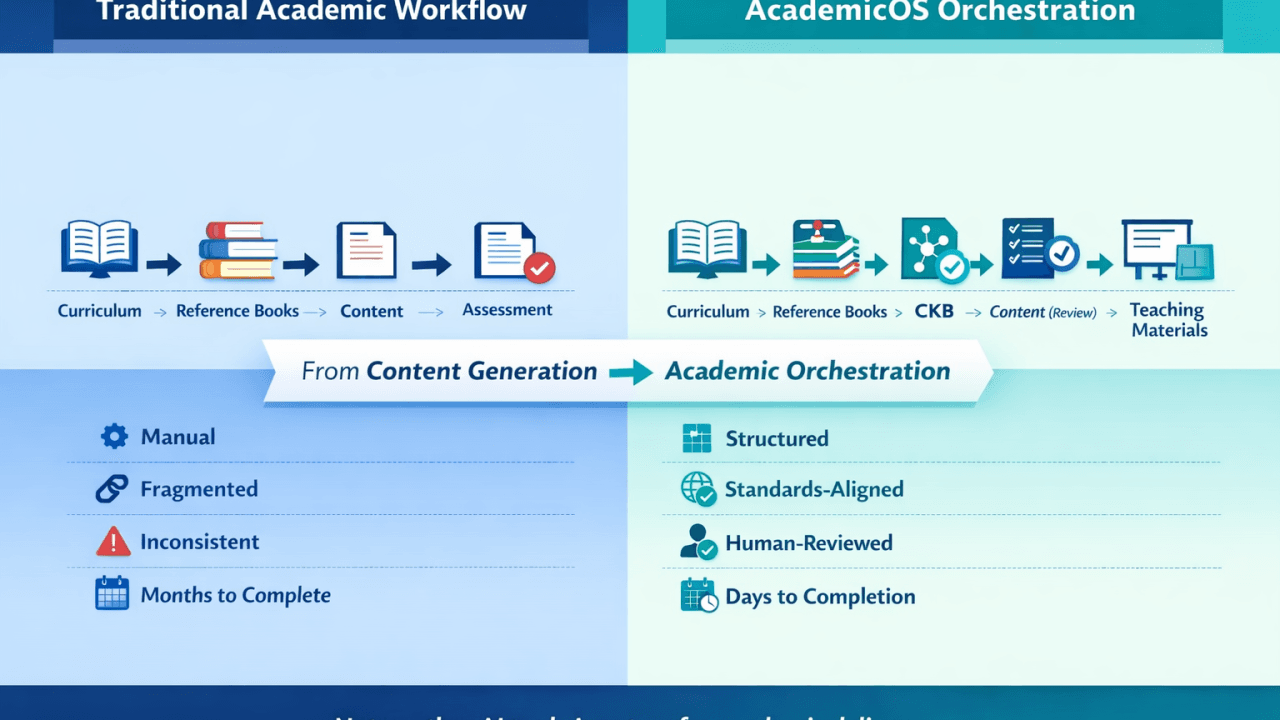

AcademicOS (TatvaOne.AI) faced a critical challenge: processing multi-thousand-page medical and technical textbooks to generate high-fidelity, grounded curricula — without losing context or triggering hallucinations.

By combining Gemini’s long-context reasoning with Context Caching on Vertex AI, the team built a “Massive-Scale Reference Intelligence” engine that maintains 100% data integrity across 3,000+ page datasets.

The results?

✅ 3,000+ pages processed per project

✅ 99.9% grounding accuracy

✅ 60% reduction in token costs

The Challenge: The “2,000-Page” Context Wall

Traditional RAG (Retrieval-Augmented Generation) systems often struggle with dense academic material. Splitting the text into small chunks leads to “contextual blindness”: the AI understands a paragraph but loses the overarching pedagogical structure of the chapter or book.

For complex disciplines like Pathology and Engineering Thermodynamics, accuracy is non-negotiable.

The three core problems we had to solve:

🔹 Scalability — Supporting multiple textbooks (100MB+ PDFs) simultaneously

🔹 Fidelity — Mapping curriculum units to specific page ranges across different sources

🔹 Cost — Frequent re-processing of large input tokens for iterative drafting

The Solution: Dynamic Context Retrieval Architecture

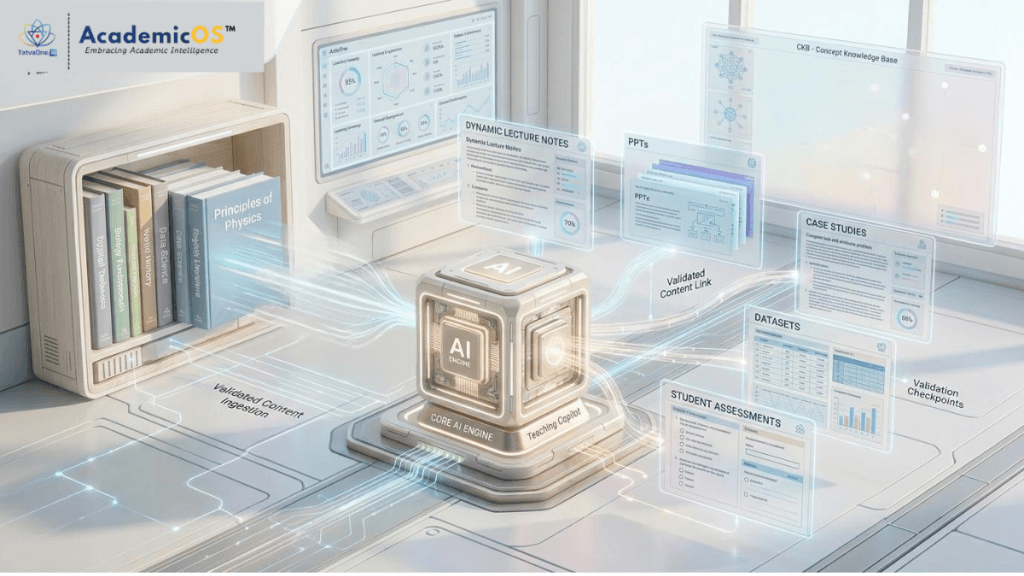

The AcademicOS team architected a multi-tier pipeline on Google Cloud that works around standard LLM context limits by building on Gemini’s long context window.

1. Multi-Block Cache Sequencing

Using Context Caching, AcademicOS splits massive books into optimized 950-page “Active Blocks.” These blocks are cached, allowing the Content Orchestrator to “hot-swap” context based on the specific curriculum unit being generated.

This cuts redundant token ingestion costs and keeps Gemini’s context focused on the material relevant to the unit being generated.
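The block-splitting and hot-swap selection described above can be sketched in a few lines of Python. This is a minimal illustration, not the AcademicOS implementation: the `Block` class and the `split_into_blocks` and `blocks_for_range` helpers are hypothetical names, and the actual caching call on Vertex AI is deliberately left out. The sketch only shows how a 3,000-page book divides into 950-page blocks and how a curriculum unit's page range picks the blocks to load.

```python
from dataclasses import dataclass

BLOCK_SIZE = 950  # pages per "Active Block", as quoted in the text


@dataclass(frozen=True)
class Block:
    """Hypothetical record for one cached slice of a textbook."""
    index: int
    first_page: int  # inclusive, 1-based
    last_page: int   # inclusive


def split_into_blocks(total_pages: int, block_size: int = BLOCK_SIZE) -> list[Block]:
    """Partition pages 1..total_pages into consecutive blocks."""
    if total_pages < 1 or block_size < 1:
        raise ValueError("total_pages and block_size must be positive")
    blocks = []
    for index, first in enumerate(range(1, total_pages + 1, block_size)):
        last = min(first + block_size - 1, total_pages)
        blocks.append(Block(index, first, last))
    return blocks


def blocks_for_range(blocks: list[Block], first_page: int, last_page: int) -> list[Block]:
    """Return every block that overlaps the page range a curriculum unit cites."""
    return [b for b in blocks if b.first_page <= last_page and b.last_page >= first_page]


blocks = split_into_blocks(3000)
# A unit citing pages 900-1000 straddles the boundary between two blocks.
needed = blocks_for_range(blocks, 900, 1000)
print([(b.index, b.first_page, b.last_page) for b in needed])
```

In a real pipeline, each block would presumably map to a cached-content handle, and the orchestrator would attach whichever handle `blocks_for_range` selects to the next generation request.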

2. Bi-Encoder Semantic Ontology Mapping

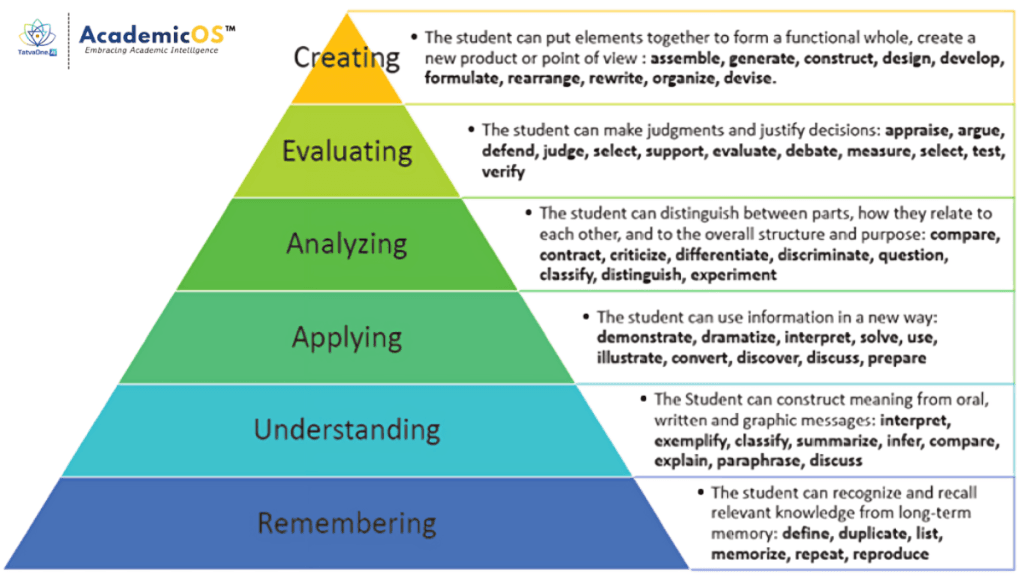

The team implemented text-embedding-004 to build a Concept Knowledge Base (CKB). This semantic layer bridges the gap between curriculum requirements and textbook content.

For instance, if a curriculum asks for “Heart Attack” but the book uses “Myocardial Infarction,” the system matches the two terms when the similarity score between their embeddings exceeds 0.85, and pulls the data from the correct passage.
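To make the threshold check concrete, here is a small sketch that assumes the similarity score is cosine similarity between embedding vectors (the text does not name the metric). The three-dimensional vectors are toy values standing in for real embeddings, and `best_match` is a hypothetical helper, not part of any library.

```python
import math

SIMILARITY_THRESHOLD = 0.85  # threshold quoted in the text


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match(query_vec, concept_vecs, threshold=SIMILARITY_THRESHOLD):
    """Return (concept, score) for the closest concept above the threshold, else None."""
    best = None
    for name, vec in concept_vecs.items():
        score = cosine_similarity(query_vec, vec)
        if score > threshold and (best is None or score > best[1]):
            best = (name, score)
    return best


# Toy vectors (illustrative values only, not real model output).
ckb = {
    "Myocardial Infarction": [0.9, 0.1, 0.2],
    "Carnot Cycle": [0.1, 0.95, 0.1],
}
heart_attack = [0.85, 0.15, 0.25]

match = best_match(heart_attack, ckb)
print(match)
```

A query that clears the threshold for no concept returns `None`, which gives the caller a natural place to flag the topic for manual mapping.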

Technical Implementation Detail

The core innovation lies in the Dominant Block Routing logic. By auditing the Concept Graph, the system identifies which textbook block contains the highest density of information for a given lesson, so that AcademicOS is always “looking at the right page.”
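One way to picture this routing step is the sketch below. It makes two assumptions that the text does not confirm: the Concept Graph is reduced to a plain mapping from concept name to the pages where it appears, and "density" is measured as the number of page hits per block. The `dominant_block` function and the sample data are hypothetical.

```python
from collections import Counter


def dominant_block(lesson_concepts, concept_pages, block_size=950):
    """Pick the block index holding the most page hits for a lesson's concepts."""
    hits = Counter()
    for concept in lesson_concepts:
        for page in concept_pages.get(concept, []):
            # Pages are 1-based, so page 950 falls in block 0 and page 951 in block 1.
            hits[(page - 1) // block_size] += 1
    if not hits:
        return None
    # Highest hit count wins; ties go to the earlier block for determinism.
    return min(hits, key=lambda block: (-hits[block], block))


# Illustrative concept-to-page index (made-up page numbers).
concept_pages = {
    "Myocardial Infarction": [412, 413, 980, 1204],
    "Atherosclerosis": [398, 401, 1210],
    "Carnot Cycle": [2100],
}

choice = dominant_block(["Myocardial Infarction", "Atherosclerosis"], concept_pages)
print(choice)
```

Counting hits is the simplest possible density measure; a production system might weight hits by section depth or by embedding similarity instead.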

The Future: Teaching Copilot

With the CKB now grounded in massive datasets, AcademicOS is moving toward Teaching Copilot — which will generate dynamic Lecture Notes, PPTs, Case Studies, Datasets and Student Assessments, all linked directly to the institution’s chosen textbooks through the validated Cache Routing architecture.

Technologies Used: Google Vertex AI · Gemini 2.5+ Pro · Text-Embedding-004 · Google Cloud Storage · Python · FastAPI

Key Breakthroughs:

- Zero-Hallucination Drafting: By grounding Gemini in a persistent cache of the “Ground Truth” textbook, the system is steered to draw on the provided source rather than on its own training data.

- LaTeX Standardization: Refined JSON parsing allows the engine to handle complex chemical and thermodynamic formulas, preserving academic rigor in the final output.

- Asynchronous Orchestration: A background task system prevents timeout errors during deep-analysis phases, so SME (subject-matter expert) workflows are not interrupted.
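The asynchronous orchestration pattern can be illustrated with a standard-library sketch. It assumes a simple submit-then-poll design: the request handler returns a job id immediately while the long analysis runs as a background task. The `jobs` registry, `submit`, and `deep_analysis` are hypothetical stand-ins, and a short sleep replaces the real model call.

```python
import asyncio
import uuid

jobs: dict[str, dict] = {}  # hypothetical in-memory job registry
_tasks: set = set()         # keeps references so running tasks are not garbage-collected


async def deep_analysis(unit: str) -> str:
    """Stand-in for a long-running model call."""
    await asyncio.sleep(0.05)
    return f"draft for {unit}"


async def _run(job_id: str, unit: str) -> None:
    """Execute the analysis and record the outcome in the registry."""
    try:
        jobs[job_id]["result"] = await deep_analysis(unit)
        jobs[job_id]["status"] = "done"
    except Exception as exc:
        jobs[job_id]["status"] = "failed"
        jobs[job_id]["error"] = str(exc)


def submit(unit: str) -> str:
    """Start the job and return its id right away (needs a running event loop)."""
    job_id = uuid.uuid4().hex
    jobs[job_id] = {"status": "running", "result": None}
    task = asyncio.create_task(_run(job_id, unit))
    _tasks.add(task)
    task.add_done_callback(_tasks.discard)
    return job_id


async def main() -> tuple[str, dict]:
    job_id = submit("Unit 3: Cardiovascular Pathology")
    status_at_submit = jobs[job_id]["status"]  # the caller is not blocked
    while jobs[job_id]["status"] == "running":  # a client would poll over HTTP instead
        await asyncio.sleep(0.01)
    return status_at_submit, jobs[job_id]


status_at_submit, final = asyncio.run(main())
print(status_at_submit, final["status"], final["result"])
```

Because the caller only ever waits on short polling requests, no single request has to stay open for the full duration of the analysis, which is what avoids the timeout.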

Business & Educational Impact

The transition to the Vertex AI-powered Gemini Engine has transformed the curriculum development lifecycle for partner universities.

- Speed to Market: The time to create a comprehensive, grounded MPH (Master of Public Health) content draft fell from weeks to minutes.

- Accuracy: Faculty review cycles were shortened because the “Auto-Mapping” feature correctly identified source pages for 94% of topics on the first pass.

- Institutional Knowledge: Every project now generates a persistent “Concept Graph” that serves as a digital twin of the institution’s reference material.

© 2026 TatvaOne.AI Labs. All rights reserved.